Duration: 7 months (Master’s thesis)

My Roles: UX Researcher, UX Designer, Prompt Engineer

For my HCI master’s thesis, conducted at a leading European research institute, I researched, designed, and evaluated an AI-powered interface that streamlines information retrieval while supporting critical engagement with AI-generated content. I created a high-fidelity prototype, designed structured outputs for clarity, and conducted a mixed-methods study to assess usability, user experience, and interaction behaviors. This project deepened my ability to design for responsible AI use and offered insights into how interface features, user expectations, and contextual factors shape interactions with AI systems in real-world settings.

Note: Some project details have been generalized or omitted to respect confidentiality agreements.

AI systems are increasingly used to support tasks like document retrieval, summarization, and question answering [1, 2]. Their appeal lies in how they can make information access faster, easier, and more intuitive [3, 4, 5].

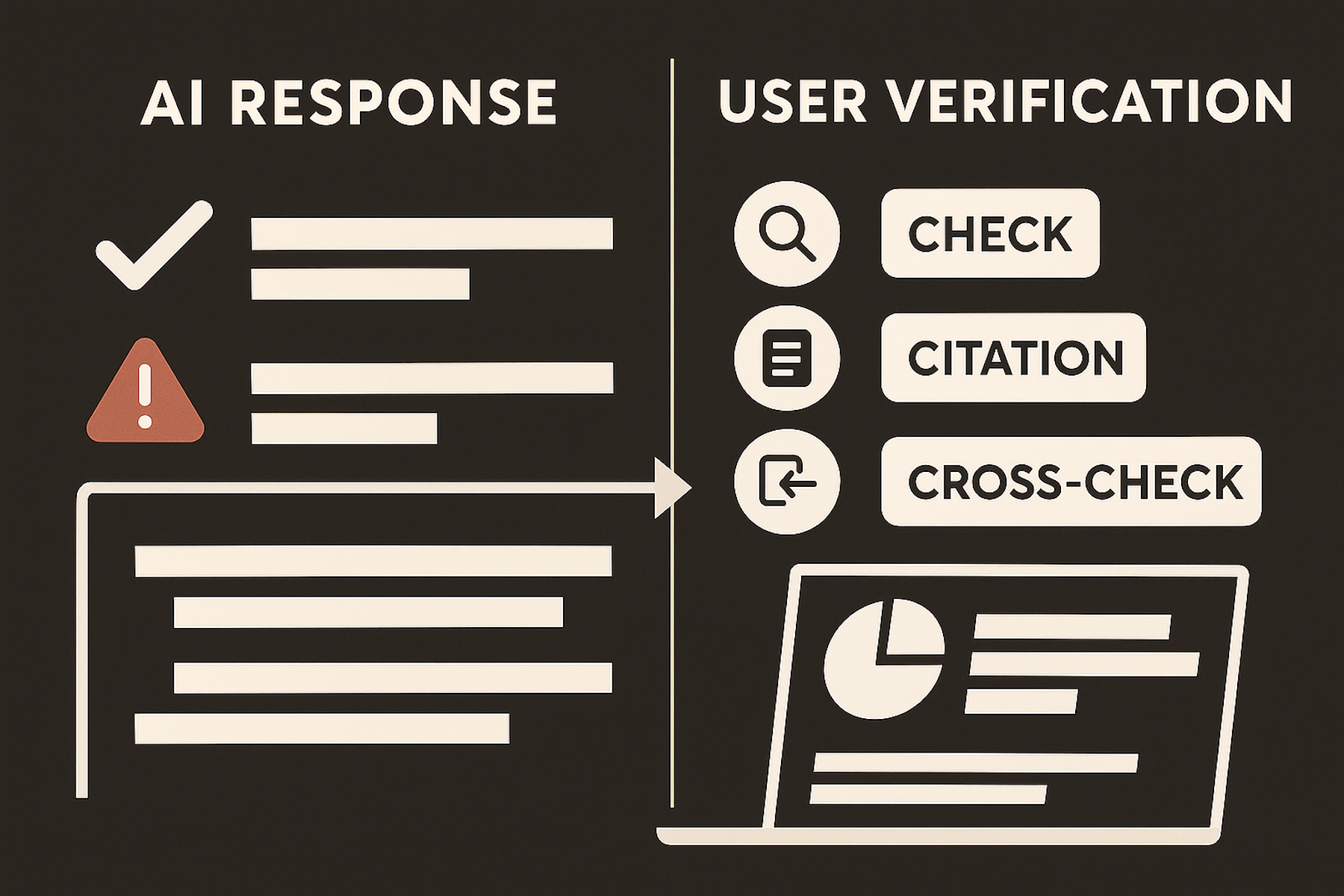

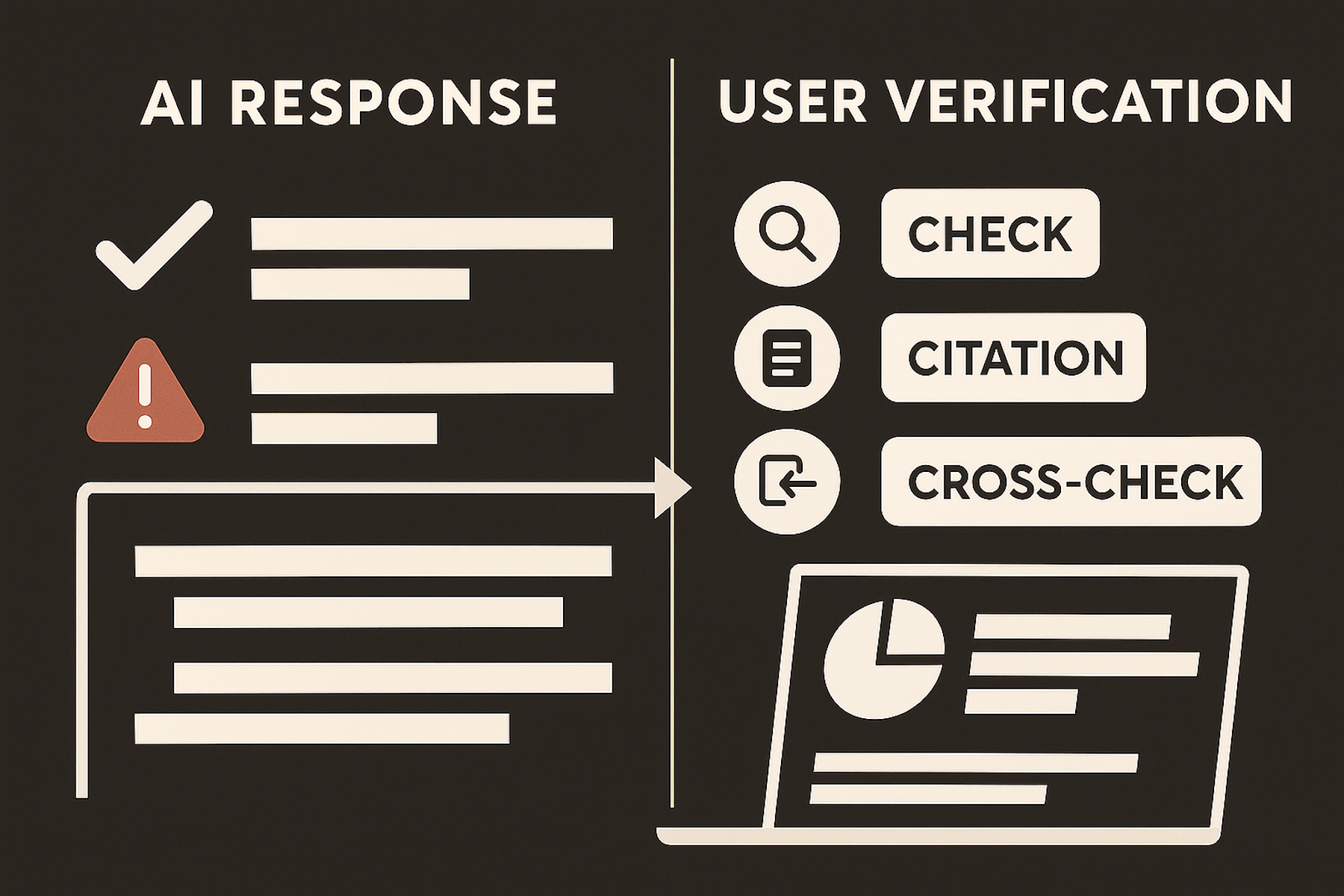

Yet alongside these benefits, generative AI carries significant risks. Its outputs are prone to factual errors, inconsistencies, and hallucinations [6, 7]. These issues often go unnoticed due to the models’ plausible-sounding responses, which can mislead users [8]. Furthermore, users may find it difficult to assess the reliability of AI-generated output, as many current systems lack effective support for verification.

To address these challenges, I designed an experimental AI-powered interface and explored interface and prompt strategies that support critical engagement. The goal was not only to present information clearly, but also to encourage users to question and validate AI-generated responses through a combination of interface features and structured output design.

Collaborating closely with stakeholders, researchers, and developers, I created a prototype and engineered a custom prompt designed to support critical engagement with the AI’s output. The interface was designed to provide easy access to relevant information while also communicating system limitations to set realistic expectations.

The project began with an employee survey to capture workplace search challenges and potential AI use cases. A thematic analysis showed that most employees wanted faster document access and clearer procedural guidance, which directly influenced the prototype’s emphasis on source visibility, a work-oriented interaction style, and a supportive tone.

To evaluate the prototype, I conducted a mixed-methods study combining observation, surveys, and interviews. This approach provided both quantitative and qualitative insights into usability, user experience, and how design features shaped user engagement.

I analyzed the data using established UX research methods, including the Critical Incident Technique, thematic analysis, and statistical analysis, to identify patterns in user behavior, usability pain points, and opportunities for improvement.

The evaluation demonstrated high usability and positive user experience scores. Participants frequently highlighted the interface’s clarity and ease of use, and feedback informed practical design guidelines for creating AI interfaces that encourage more thoughtful user engagement.

One of the most challenging yet valuable parts of this project was making sense of different types of data. Observation sessions, surveys, and interviews each revealed something slightly different about how people interacted with the tool, and sometimes the findings did not fully align. I learned not to chase a single “truth,” but to look for broader patterns that helped me understand the full picture.

I also came to appreciate the practical side of working with real people. Scheduling and running sessions required a lot of flexibility: people had competing responsibilities, last-minute changes, and limited time. It reminded me how important it is to design research that respects these limitations.

Collaborating with the development team also pushed me to think more carefully about what’s technically feasible. Some features had to be simplified or left out entirely due to time or technical constraints. That forced me to prioritize: what really supports users, what’s essential for transparency, and what can be adjusted without compromising the experience.

Overall, this project deepened my understanding of what it takes to create AI tools that are both responsible and genuinely user-centered.